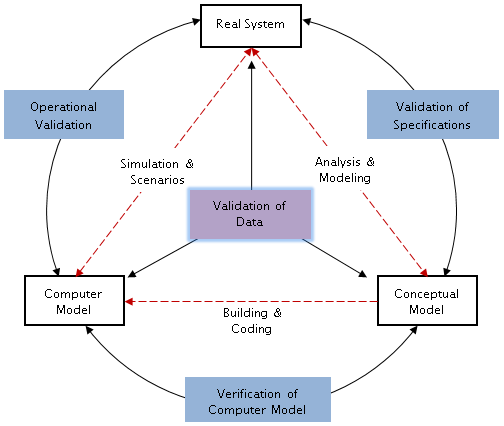

Model validation aims to show that the model, within its specific scope of application, represents the real system accurately enough to meet the objectives of the simulation. This should be checked at almost every stage of the project.

Model verification aims to ensure that the computer model was developed correctly; it concerns both the features of the software and the skills of the modeler. Obviously, a poorly trained user of an inadequate tool immediately compromises the trust one can place in any industrial simulation model!

We list here some tools and tests that can be used for validation as well as for verification.

More indicators

A large number of indicators helps in tracing and understanding what is happening in a model. To complement the indicators defined in the technical specifications, and those we recommend, you should add indicators that check internal rules and flow consistency. The more relevant indicators you put in a model, the better you will be able to follow exactly what is happening and to detect possible bugs, incorrect values or blockages.

When testing, add tracing tools and historical reporting. At specific points along flow routes, files can be used to record transit times together with relevant properties of the flow, which makes it possible to produce a material balance. Analyzing these data will confirm that internal rules are correctly executed, or explain why decisions are not taken as expected.
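As an illustration, the material balance and transit times could be derived from a trace along the following lines. This is only a minimal sketch: the record layout `(time, item_id, checkpoint)` and the checkpoint names `"in"` and `"out"` are assumptions made for the example, not a format imposed by any particular simulation tool.

```python
from collections import Counter

def material_balance(events):
    """Count items that entered and left, from (time, item_id, checkpoint) records.

    The checkpoint names "in" and "out" are hypothetical.
    """
    counts = Counter(checkpoint for _, _, checkpoint in events)
    entered, left = counts["in"], counts["out"]
    return {"entered": entered, "left": left, "in_system": entered - left}

def transit_times(events):
    """Return the transit time of every item that has both an entry and an exit record."""
    entry_time = {}
    result = {}
    for time, item, checkpoint in sorted(events):
        if checkpoint == "in":
            entry_time[item] = time
        elif checkpoint == "out":
            if item not in entry_time:
                # An exit without an entry points to a flow inconsistency.
                raise ValueError(f"item {item!r} left without entering")
            result[item] = time - entry_time.pop(item)
    return result

# Hypothetical trace: two items enter, one of them leaves.
trace = [(0.0, "a", "in"), (1.0, "b", "in"), (2.5, "a", "out")]
balance = material_balance(trace)
times = transit_times(trace)
```

A balance in which `entered - left` does not match the number of items visibly present in the model at the end of the run is a good reason to look for lost or duplicated flow.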

As verification progresses, many of these indicators will of course be discarded.

Comparisons

It is useful to compare simulation results with:

- Results from analytical calculations and from various analyses made using other tools in preparation for the simulation.

- Previous simulation models, if any exist for the same scope.

- Historical data available about the real system – even if it means playing a scenario not required in specifications, but for which consistent historical data exist.
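Such comparisons can be partly automated. The sketch below flags every indicator whose relative deviation from a reference value exceeds a tolerance. The indicator names, the numbers and the 5% tolerance are hypothetical and chosen only for illustration; an acceptable deviation depends on the objectives of the study.

```python
def compare_indicators(simulated, reference, tolerance=0.05):
    """Return the indicators whose relative deviation from the reference exceeds tolerance."""
    deviations = {}
    for name, ref in reference.items():
        sim = simulated[name]
        # Fall back to the absolute deviation when the reference value is zero.
        rel = abs(sim - ref) / abs(ref) if ref != 0 else abs(sim)
        if rel > tolerance:
            deviations[name] = rel
    return deviations

# Hypothetical values: model output against an analytical or historical reference.
simulated = {"utilization": 0.52, "mean_wait": 1.4}
reference = {"utilization": 0.50, "mean_wait": 1.0}
flagged = compare_indicators(simulated, reference)
```

Here only `mean_wait` would be flagged, since the utilization deviates by about 4% while the waiting time deviates by about 40%.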

Visual aids

Animation: an animated model graphically shows its behavior as time goes by, thus providing immediate and intuitive feedback. It is generally easy to implement animation while building the model, and it enables incremental corrections.

Graphs: numerous values in a model can be measured and plotted in a graph during simulation. It is an easy way to verify changes in indicators, the size of a queue or a percentage of available counters. A glance at the curve is enough to spot an inconsistency.

Sets of tests

The model should behave as expected over a selection of input values and internal parameters. Taking a simple illustration from waiting-queue simulation:

- Sensitivity test: Modifying input values and internal parameters to see how the model reacts, and the consequences on results. Example: if the customers’ arrival rate increases, it should cause a rise in counter utilization, and a longer waiting time. A quantitative analysis of the differences in results is recommended.

- Test with constant values: With a constant arrival rate, the utilization rate is easily calculated and can be compared with the rate produced by the model.

- Consistency test: Verifying model consistency by running several replications of the simulation; results should be almost identical.

- Test in extreme conditions: Model results must remain plausible even in extreme, improbable or even absurd conditions. Example: if the customers' arrival rate is zero, then the number of served customers must be zero too. Conversely, if the arrival rate is infinite, the number of served customers should not be infinite, since the capacity of the counters is limited.
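The tests above can be sketched on a deliberately tiny model. The function `simulate_counter` below is a hypothetical stand-in for a real simulation model: a single counter, a FIFO queue and a fixed service time (assumed to be greater than zero). Its name, its parameters and the numerical values used are all assumptions made for this example.

```python
import random

def simulate_counter(arrival_rate, service_time, horizon, seed=0, constant=False):
    """Hypothetical single-counter FIFO queue with a fixed, positive service time.

    Returns the number of customers fully served before `horizon`,
    the counter utilization and the mean waiting time.
    """
    if arrival_rate <= 0:
        # No arrivals at all: nothing can be served.
        return {"served": 0, "utilization": 0.0, "mean_wait": 0.0}
    rng = random.Random(seed)
    clock = 0.0      # arrival time of the current customer
    free_at = 0.0    # time at which the counter becomes free
    waits = []
    while True:
        gap = 1.0 / arrival_rate if constant else rng.expovariate(arrival_rate)
        clock += gap
        start = max(clock, free_at)
        if start + service_time > horizon:
            break    # this customer cannot finish before the end of the run
        waits.append(start - clock)
        free_at = start + service_time
    served = len(waits)
    return {
        "served": served,
        "utilization": served * service_time / horizon,
        "mean_wait": sum(waits) / served if served else 0.0,
    }

# Test with constant values: one arrival every 2 time units, 1 unit of service,
# so the expected utilization is arrival_rate * service_time = 0.5.
constant_run = simulate_counter(0.5, 1.0, horizon=1000.0, constant=True)

# Sensitivity test: same seed, higher arrival rate.
low = simulate_counter(0.5, 1.0, horizon=10000.0, seed=1)
high = simulate_counter(0.8, 1.0, horizon=10000.0, seed=1)

# Extreme conditions: no arrivals, then a practically infinite arrival rate.
empty = simulate_counter(0.0, 1.0, horizon=1000.0)
flooded = simulate_counter(1e9, 1.0, horizon=1000.0, constant=True)
```

One would expect `constant_run["utilization"]` to be close to 0.5, `high` to show a higher utilization and a longer mean wait than `low`, `empty["served"]` to be zero, and `flooded["served"]` to stay bounded by `horizon / service_time`, because the counter cannot serve more than one customer per service time.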

On very large models with numerous parameters, it is not possible to apply all tests, so a selection must be made, focusing on the main indicators or on critical processes in the system.

Enough replications

A model cannot be declared valid just because it ran once without bugs and with acceptable indicators. If the system has any randomness in its parameters, consistency of results must be ensured by running several replications with different random seeds until the results converge. On the other hand, if several scenarios are being compared, the random seed should be fixed so that the true influence of each tested parameter can be seen without the blur of randomness. Once the effect of each parameter is confirmed, test again with other seeds.
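Both ideas can be sketched in a few lines. In the example below, `toy_model` is a hypothetical stand-in for a real model (mean waiting time in a single-server queue), and "convergence" is interpreted, as one possible criterion among others, as the approximate 95% confidence half-width falling below 5% of the mean. The function names, the thresholds and the use of the normal quantile 1.96 are assumptions of this sketch.

```python
import random
import statistics

def toy_model(seed, arrival_rate, service_rate=1.0, customers=2000):
    """Hypothetical stand-in for a real model: mean wait in a single-server queue."""
    rng = random.Random(seed)
    wait, total = 0.0, 0.0
    for _ in range(customers):
        total += wait
        service = rng.expovariate(service_rate)
        gap = rng.expovariate(arrival_rate)
        # Wait of the next customer, given the wait of the current one.
        wait = max(0.0, wait + service - gap)
    return total / customers

def replicate(model, min_runs=5, max_runs=200, rel_precision=0.05, **params):
    """Add replications (one seed each) until the mean is precise enough."""
    results = []
    mean = half = float("nan")
    for seed in range(max_runs):
        results.append(model(seed, **params))
        if len(results) < min_runs:
            continue
        mean = statistics.mean(results)
        # Approximate 95% half-width, using the normal quantile 1.96.
        half = 1.96 * statistics.stdev(results) / len(results) ** 0.5
        if mean > 0 and half <= rel_precision * mean:
            break
    return mean, half, len(results)

def paired_differences(model, seeds, params_a, params_b):
    """Run both scenarios with the same seeds and return B minus A for each seed."""
    return [model(s, **params_b) - model(s, **params_a) for s in seeds]
```

For instance, `replicate(toy_model, arrival_rate=0.5)` returns the mean, the half-width and the number of replications that were needed, while `paired_differences(toy_model, range(10), {"arrival_rate": 0.5}, {"arrival_rate": 0.7})` isolates the effect of the arrival rate because each pair of runs shares the same random draws.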

Conclusion

With rigor and critical sense, with consensus and good will, it is possible to reach the confidence level required by a simulation approach.

If you apply all our advice and recipes, you have the best chance of producing a correct model! 😉